Following this week’s announcement, some experts think Apple will soon announce that iCloud will be encrypted. If iCloud is encrypted but the company can still identify child abuse material, pass evidence along to law enforcement, and suspend the offender, that may relieve some of the political pressure on Apple executives.

It wouldn’t relieve all the pressure: most of the same governments that want Apple to do more on child abuse also want more action on content related to terrorism and other crimes. But child abuse is a real and sizable problem where big tech companies have mostly failed to date.

“Apple’s approach preserves privacy better than any other I am aware of,” says David Forsyth, the chair of the computer science department at the University of Illinois Urbana-Champaign, who reviewed Apple’s system. “In my judgement this system will likely significantly increase the likelihood that people who own or traffic in [CSAM] are found; this should help protect children. Harmless users should experience minimal to no loss of privacy, because visual derivatives are revealed only if there are enough matches to CSAM pictures, and only for the images that match known CSAM pictures. The accuracy of the matching system, combined with the threshold, makes it very unlikely that pictures that are not known CSAM pictures will be revealed.”

What about WhatsApp?

Every big tech company faces the horrifying reality of child abuse material on its platform. None has approached it the way Apple has.

Like iMessage, WhatsApp is an end-to-end encrypted messaging platform with billions of users. Like any platform that size, it faces a big abuse problem.

“I read the information Apple put out yesterday and I’m concerned,” WhatsApp head Will Cathcart tweeted on Friday. “I think this is the wrong approach and a setback for people’s privacy all over the world. People have asked if we’ll adopt this system for WhatsApp. The answer is no.”

WhatsApp includes reporting capabilities so that any user can flag abusive content to the company. While the capabilities are far from perfect, WhatsApp reported over 400,000 cases to NCMEC last year.

“This is an Apple built and operated surveillance system that could very easily be used to scan private content for anything they or a government decides it wants to control,” Cathcart said in his tweets. “Countries where iPhones are sold will have different definitions on what is acceptable. Will this system be used in China? What content will they consider illegal there and how will we ever know? How will they manage requests from governments all around the world to add other types of content to the list for scanning?”

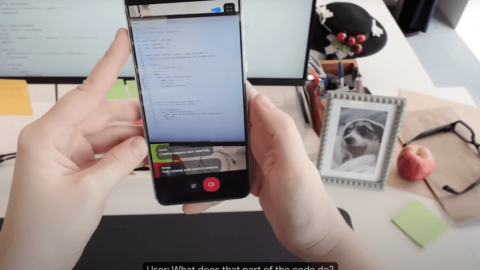

In its briefing with journalists, Apple emphasized that the new scanning technology is being released only in the United States so far. But the company went on to argue that it has a track record of fighting for privacy and expects to continue to do so. In that way, much of this comes down to trust in Apple.

The company argued that the new systems cannot be misappropriated easily by government action—and emphasized repeatedly that opting out was as easy as turning off iCloud backup.

Despite being one of the most popular messaging platforms on earth, iMessage has long been criticized for lacking the kind of reporting capabilities that are now commonplace across the social internet. As a result, Apple has historically reported to NCMEC only a tiny fraction of the cases that companies like Facebook do.

Instead of adopting that solution, Apple has built something entirely different—and the final outcomes are an open and worrying question for privacy hawks. For others, it’s a welcome radical change.

“Apple’s expanded protection for children is a game changer,” John Clark, president of the NCMEC, said in a statement. “The reality is that privacy and child protection can coexist.”

High stakes

An optimist would say that enabling full encryption of iCloud accounts while still detecting child abuse material is both an anti-abuse and privacy win—and perhaps even a deft political move that blunts anti-encryption rhetoric from American, European, Indian, and Chinese officials.

A realist would worry about what comes next from the world’s most powerful countries. It is a virtual guarantee that Apple will get, and probably already has received, calls from capital cities as government officials begin to imagine the surveillance possibilities of this scanning technology. Political pressure is one thing; regulation and authoritarian control are another. But that threat is not new, nor is it specific to this system. As a company with a track record of quiet but profitable compromise with China, Apple has a lot of work to do to persuade users of its ability to resist draconian governments.

All of the above can be true. What comes next will ultimately define Apple’s new tech. If this feature is weaponized by governments for broadening surveillance, then the company is clearly failing to deliver on its privacy promises.
